AI vs. Human Precision: Why Generic Tools Fail the Pharma Test

AI is everywhere, and pharma presentation design is no exception. New tools promise instant compliance, but designing more than 1.8 million slides for global leaders, including top pharmaceutical companies, has taught us to separate hype from reality.

We didn't set out to reject AI, but to find precisely where it fits.

In fact, our dedicated AI team of 14 specialists constantly tests new solutions to automate our internal processes. Because we use these tools daily at enterprise scale, we know exactly where automation adds value and where it creates regulatory compliance liabilities.

In this analysis, we break down the specific compliance risks of generic AI presentation makers for pharma and define the hybrid model required for safety.

Can AI Build Pharmaceutical Presentations?

While AI can generate initial drafts and organize text, it currently lacks the "Contextual Intelligence" required for pharmaceutical compliance. Most AI platforms struggle with visual hierarchy, brand-specific formatting, and the precision required for clinical data visualization.

For high-stakes pharma decks, a "Human-in-the-Loop" approach, like that used by 24Slides, is necessary to ensure assets are MLR-ready and scientifically accurate.

The Architecture Gap: Why AI Struggles with Clinical Slides

To understand why generic generative AI software so often fails Medical, Legal, and Regulatory (MLR) review, you need to look "under the hood" at the two main ways these tools build slides.

1. The "Injectors" (Template Fillers)

These platforms (like Copilot or Canva Magic Design) function by searching a library of pre-made templates and "injecting" your text into existing placeholders.

- The Pharma Problem: Structural Rigidity.

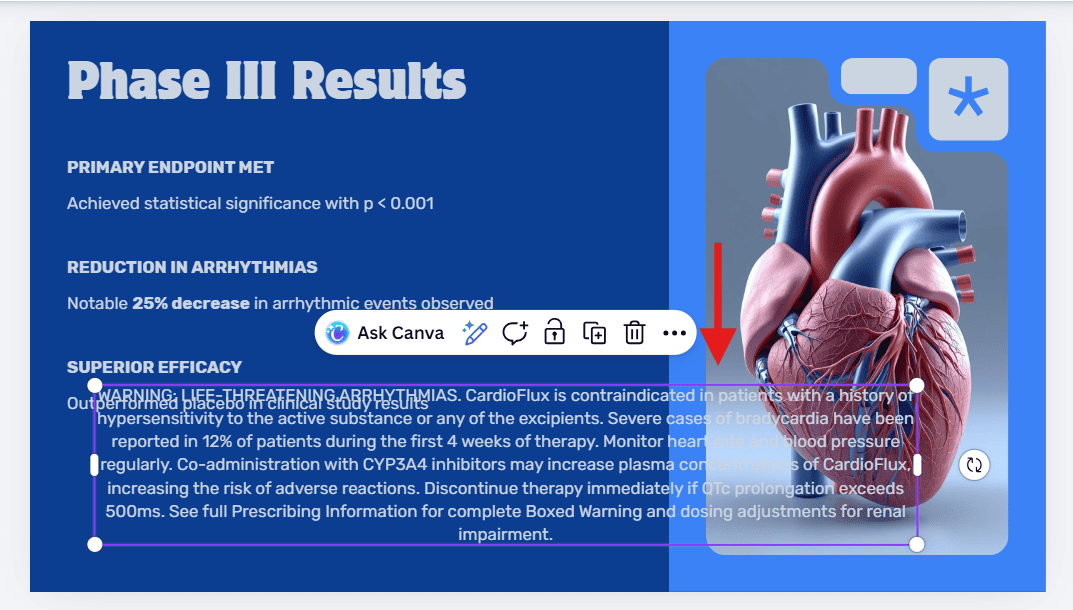

- The Experiment: We tested this by asking Canva Magic Design to create a "Phase III Efficacy" slide for a cardiology drug. We explicitly requested a layout that included a placeholder for a mandatory safety disclaimer.

- The "Hallucinated" Capability: The AI confirmed the request, stating it would include the footer, but then generated a standard marketing template without it. The AI could not "invent" a new structure to meet the requirement; it was restricted to its existing library.

The Result:

When we manually pasted the required mandatory safety disclaimer text into the slide, the template collapsed. As seen below, the rigid XML structure could not adapt to the volume of legal text, causing it to overlap with the visual assets and obscure critical efficacy data.
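The collapse comes down to capacity: a fixed-size placeholder can hold only so many wrapped lines of text. The sketch below approximates that check in Python. The function name `fits_placeholder`, the half-em average glyph width, and the 1.2 line spacing are illustrative assumptions, not values taken from Canva or PowerPoint.

```python
import textwrap

# Assumptions (heuristics, not measured font metrics):
AVG_CHAR_WIDTH_EM = 0.5  # average glyph width as a fraction of font size
LINE_SPACING = 1.2       # line height as a multiple of font size


def fits_placeholder(text: str, width_pt: float, height_pt: float,
                     font_size_pt: float) -> bool:
    """Roughly estimate whether text fits a fixed-size placeholder."""
    chars_per_line = max(1, int(width_pt / (font_size_pt * AVG_CHAR_WIDTH_EM)))
    lines = 0
    for paragraph in text.splitlines() or [""]:
        # Greedy word wrap; an empty paragraph still occupies one line.
        lines += max(1, len(textwrap.wrap(paragraph, width=chars_per_line)))
    return lines * font_size_pt * LINE_SPACING <= height_pt
```

For example, a footer 720 pt wide and 30 pt tall at 10 pt type holds about two lines under these assumptions, so a one-line pointer to the Prescribing Information fits while a multi-sentence safety statement does not. Real layout engines measure actual glyphs, so treat this as a back-of-the-envelope test.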

2. The "Liquid Canvas" (Web-Based Builders)

Think of this as a web developer coding a site in real time. Tools like Gamma or Tome work this way: the slide is generated from a prompt or outline as HTML code rather than built in the standard PowerPoint engine.

- The Pharma Problem: The "Compliance Straitjacket." Because these engines use relative positioning (web code) to keep the layout fluid, they strictly prohibit elements from overlapping to ensure responsiveness.

- The Consequence: You cannot place elements with the pixel-perfect precision required by global brand guidelines. If your brand book says a specific milestone marker must sit precisely on a timeline axis, the code simply won't allow it.

The Experiment:

We generated a 3-Year Strategic Roadmap and attempted to place a "Decision Star" on a specific date between the pre-launch and launch phases.

The Result:

As seen in the animation below, the tool rejects the specific placement. The underlying code forces the icon into a separate grid row (above or below the line), making it impossible to accurately visualize the timeline of a critical "Go/No-Go" decision.

The Liquid Canvas Problem: Web-based AI tools calculate space dynamically. While this keeps the layout clean, it creates a "Straitjacket." The tool forces the user to snap elements into a pre-defined grid, making it impossible to layer specific assets like timeline markers with precision.
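What the "Decision Star" needs is an absolute coordinate computed from its date, not a grid row. A minimal sketch, assuming a straight horizontal axis whose start and end dates map to known x-positions in slide points; `x_position` is a hypothetical helper, not part of any presentation tool's API:

```python
from datetime import date


def x_position(milestone: date, axis_start: date, axis_end: date,
               x_left: float, x_right: float) -> float:
    """Map a date linearly onto a horizontal timeline axis (slide points)."""
    if axis_end <= axis_start:
        raise ValueError("axis_end must be after axis_start")
    if not axis_start <= milestone <= axis_end:
        raise ValueError("milestone falls outside the timeline axis")
    # Fraction of the axis's time span that has elapsed at the milestone.
    fraction = (milestone - axis_start).days / (axis_end - axis_start).days
    return x_left + fraction * (x_right - x_left)
```

Because the x-coordinate is derived from the date, the marker can sit on the axis between the pre-launch and launch phase labels, which is exactly the placement a flow-based grid refuses.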

The Three Compliance Risks AI Can't Solve (Yet)

Our AI team identified three specific areas where automation still lacks the "Contextual Intelligence" that generative AI in Medical Affairs requires.

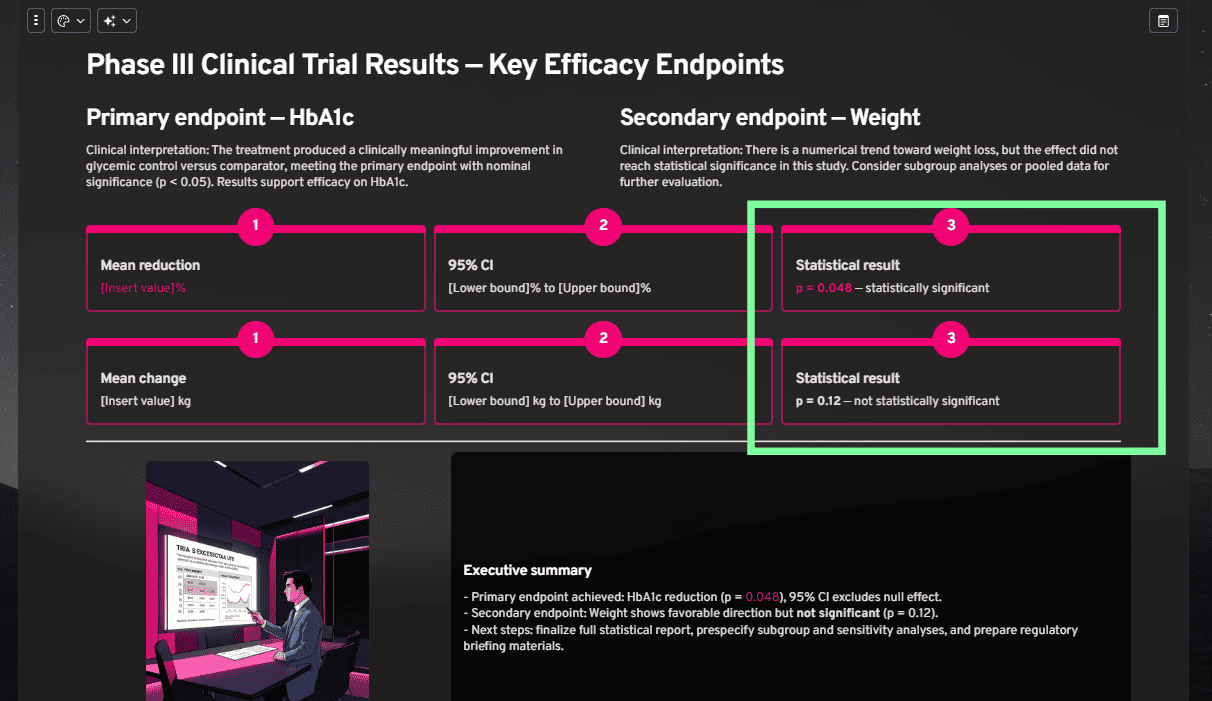

1. Visual Hierarchy (The "P-Value" Problem)

AI understands data as text strings, but it does not understand clinical weight. In pharmaceutical presentations, there is a fundamental difference between a Primary Endpoint that achieves statistical significance and a Secondary Endpoint that does not.

- The Experiment: We prompted an AI to create a results slide. We specifically included a successful Primary Endpoint (p=0.048) and a non-significant Secondary Endpoint (p=0.12).

- The Expectation: A human designer would visually de-emphasize the non-significant result (using grey or neutral tones) so the audience focuses instantly on the success.

- The AI Result: As seen below, the AI created a False Equivalency. While it correctly highlighted the specific p-value text, it wrapped the "non-significant" result in the exact same vibrant container as the success.
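The rule a human designer applies can be written down explicitly: visual weight follows the statistical result and the endpoint's role, not the text itself. A sketch, assuming a pre-specified alpha of 0.05 and hypothetical style token names such as `brand_accent` and `neutral_grey`:

```python
from dataclasses import dataclass

ALPHA = 0.05  # assumed pre-specified significance threshold


@dataclass
class Endpoint:
    name: str
    p_value: float
    primary: bool


def emphasis_style(endpoint: Endpoint, alpha: float = ALPHA) -> dict:
    """Choose visual weight from clinical meaning, not from text content."""
    significant = endpoint.p_value < alpha
    if endpoint.primary and significant:
        return {"fill": "brand_accent", "weight": "bold"}
    if significant:
        return {"fill": "brand_secondary", "weight": "regular"}
    # Non-significant results are de-emphasized with neutral tones.
    return {"fill": "neutral_grey", "weight": "regular"}
```

Applied to the experiment above, the primary endpoint (p=0.048) receives the accent treatment and the secondary endpoint (p=0.12) falls back to neutral grey. Real trials may also use hierarchical testing procedures, which this sketch ignores.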

2. The "Vibe" Trap (Brand Drift)

Generic AI algorithms are trained to prioritize "Vibes" (Aesthetics) over "Rules" (Identity). While our detailed review of AI alternatives to PowerPoint found this acceptable for general business, it creates two critical failure points for Pharma teams:

A. Protocol Failure (Colors)

Even if you upload a Brand Kit, AI lacks the context to apply it correctly. It might use your designated "Warning Red" for a positive sales chart simply because it contrasts well with the background. The slide technically uses "brand colors," but it violates the brand logic required to maintain global brand consistency. The resulting mixed visual signals are a near-certain trigger for rejection.
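The missing context is the mapping from color to meaning. A sketch of a check that treats each brand color as reserved for specific semantic roles; the hex values, role names, and the `check_color_usage` helper are hypothetical placeholders, not any real brand kit or tool:

```python
# Hypothetical brand rules: each color is reserved for specific meanings.
BRAND_COLOR_ROLES = {
    "#C8102E": {"safety_warning", "adverse_event"},  # "Warning Red"
    "#0057B8": {"efficacy", "positive_trend"},
    "#8A8D8F": {"neutral", "non_significant"},
}


def check_color_usage(elements: list[tuple[str, str]]) -> list[str]:
    """Return one problem per (semantic_role, hex_color) pair that breaks the rules."""
    problems = []
    for role, color in elements:
        allowed_roles = BRAND_COLOR_ROLES.get(color.upper())
        if allowed_roles is None:
            problems.append(f"{color} is not in the brand palette")
        elif role not in allowed_roles:
            problems.append(f"{color} is reserved for {sorted(allowed_roles)}, not '{role}'")
    return problems
```

A positive sales trend drawn in "Warning Red" passes a palette-only check but fails this one, because the color is on-brand while its meaning is not.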

B. The Typography Straitjacket (Fonts)

Pharma brand guidelines are complex, often requiring specific font weights for data labels, footnotes, and sub-headers.

- The Constraint: As seen in the example below, leading AI tools often restrict themes to just two font options (Headings and Body).

- The Consequence: You cannot define a third font style for mandatory legal text or data annotations. This forces you to manually override every single slide, negating the time saved by automation.

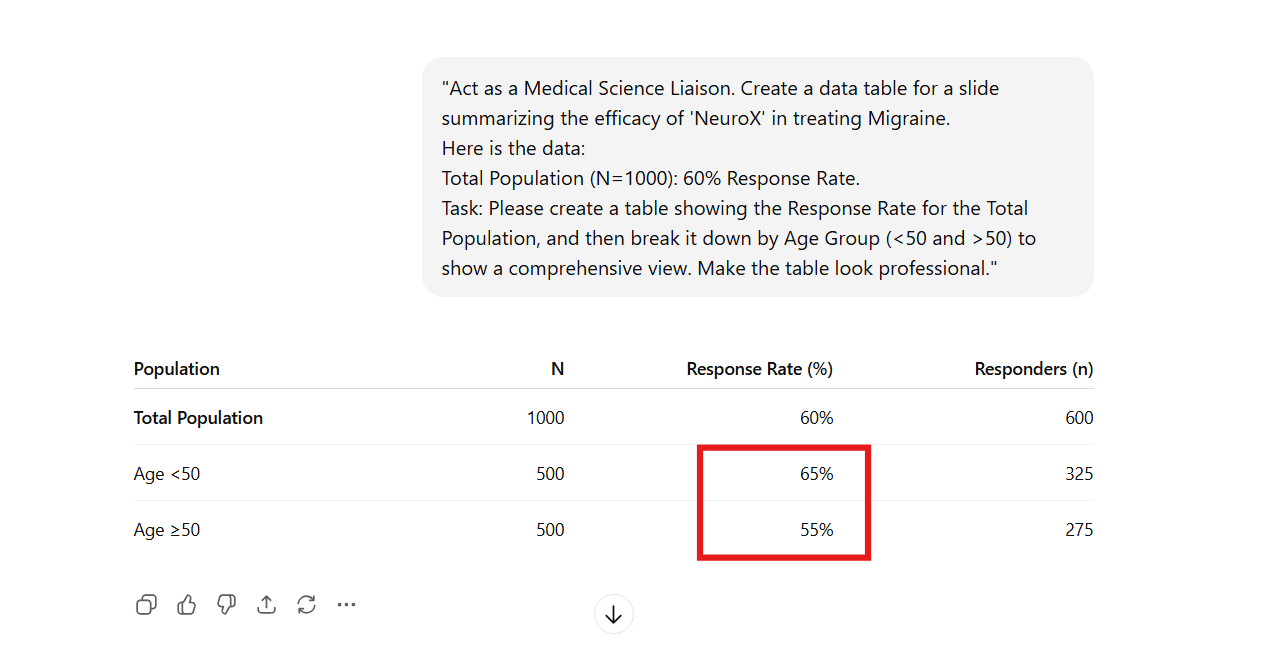
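Guidelines like these are easier to enforce when every text role has its own named style and an undefined role is an error rather than a silent fallback to Body. A sketch with a hypothetical five-role type scale; the font names, sizes, and the `style_for` helper are placeholders for illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TextStyle:
    font: str
    size_pt: float
    weight: str


# Hypothetical brand typography: more roles than a two-slot (Heading/Body) theme offers.
BRAND_TYPE_SCALE = {
    "heading": TextStyle("BrandSans", 28, "bold"),
    "body": TextStyle("BrandSans", 16, "regular"),
    "data_label": TextStyle("BrandSans Condensed", 12, "semibold"),
    "footnote": TextStyle("BrandSans", 9, "regular"),
    "legal": TextStyle("BrandSans", 8, "light"),
}


def style_for(role: str) -> TextStyle:
    """Look up a text role; fail loudly instead of silently falling back to 'body'."""
    try:
        return BRAND_TYPE_SCALE[role]
    except KeyError:
        raise ValueError(f"No brand style defined for text role '{role}'") from None
```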

3. Scientific Accuracy (Hallucinations)

This is the non-negotiable red line for pharma regulatory safety.

It is important to remember that most AI presentation makers are built on top of Large Language Models (LLMs), such as the GPT models behind ChatGPT. They share the same fundamental flaw: they are designed to "complete patterns," not to report facts.

- The Risk: When an LLM encounters a gap in data (e.g., a missing subgroup analysis), it often mathematically reverse-engineers a "plausible" number to fill the empty cell, rather than leaving it blank.

- The Experiment: We asked ChatGPT, as a representative of the underlying models, to format a response rate table. We provided the Total (60%) but omitted the Age Breakdown.

- The "Confidence" Trap: As seen below, the AI didn't ask for clarification or flag the missing data. It confidently fabricated a 65% vs 55% split to make the table look "professional."
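The underlying arithmetic shows why the table could not be completed. A pooled rate is a size-weighted average of subgroup rates, so many different splits produce the same 60% total. A minimal Python sketch; the helper names and subgroup sizes are hypothetical, since no sizes were provided:

```python
from typing import Optional


def pooled_rate(rates_pct: list[float], sizes: list[int]) -> float:
    """Overall response rate (%) of subgroups, weighted by subgroup size."""
    return sum(r * n for r, n in zip(rates_pct, sizes)) / sum(sizes)


def format_row(label: str, value: Optional[float]) -> str:
    """Render a table row, leaving missing values visibly blank and flagged."""
    return f"{label}: {value:.0f}%" if value is not None else f"{label}: [NOT PROVIDED]"
```

Both 65%/55% and 70%/50% pool to 60% with equal-sized groups, and the same 65%/55% split pools to 57.5% if the groups hold 50 and 150 patients. The total alone cannot determine the breakdown, so the only honest rendering of the missing cells is blank and flagged.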

The Consequence:

In this simplified example, the fabrication is obvious. But imagine the risk in a complex Phase III deck built with an AI presentation maker for pharma. A generic model, prioritizing layout over accuracy, might subtly "smooth" a survival curve, adjust a decimal to fit a text box, or "complete" a missing subgroup just to balance the visual design.

In a generic business deck, this might pass as harmless filler. In a clinical presentation, it is scientific falsification.

The time you "save" using automation is immediately lost to the "Verification Tax"—the hours your Medical Directors must spend auditing every single data point to ensure the AI didn't invent a patient that doesn't exist.
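Part of that verification can be mechanical: every number on a slide should trace back verbatim to the source data. A naive sketch, assuming source values are supplied as strings exactly as reported; `untraceable_numbers` is a hypothetical helper, and its regex ignores signs and thousands separators:

```python
import re

# Matches integers and decimals such as "60", "60.4", "0.048".
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def untraceable_numbers(slide_text: str, source_values: set[str]) -> list[str]:
    """List numbers that appear on a slide but not verbatim in the source data."""
    return [n for n in NUMBER.findall(slide_text) if n not in source_values]
```

A slide reading "p=0.05" against a source value of 0.048 is flagged, because rounding a p-value changes the data, not just the design. A flagged number still needs a human to decide whether it is an error.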

Non-Negotiable: Data Governance in the Boardroom

Beyond design, there is the critical issue of AI Data Privacy in Healthcare.

Uploading proprietary Phase III data, unpublished efficacy results, or confidential launch strategies into a public, web-based AI generator is a significant security risk. Many generic platforms can use uploaded content to train their models, meaning your intellectual property could theoretically become part of the public algorithm.

The Two Layers of Safety

However, security is only the first layer. Even when an AI platform claims "enterprise-grade security," that protection addresses infrastructure: data access, storage, and transfer.

Security protects the system. It does not protect the content.

This creates a strategic tension. While secure AI can prevent data leakage, it cannot prevent Data Distortion:

- Hallucinated numbers to fill a grid.

- Statistical smoothing of survival curves.

- Contextual distortion of "Fair Balance" layouts.

The "Human-in-the-Loop" Reality Check

In a regulated industry, design automation ≠ data integrity.

The real question for Medical Directors is not just "Is there a human in the loop?" but "What is that human's directive?"

- An AI "guesses" to make the slide look pretty.

- A dedicated designer "adheres" to make the slide accurate.

The 24Slides Compliance Framework

We do not interpret your science; we protect it from distortion. We ensure that the source data you provide, down to the specific p-value and decimal point, is transferred with absolute fidelity.

At 24Slides, we operate under a strict Enterprise-Grade Compliance Framework. We provide the speed of technology within a secure environment. Unlike public tools, our infrastructure is built for the enterprise:

- SOC 2 Type II: Verifies that our internal controls for privacy and security meet rigorous, independent audit standards.

- GDPR: Ensures that our handling of personal data complies with EU data protection regulation.

- Secure Infrastructure: We host our platform on DigitalOcean, leveraging their ISO 27001-certified data centers to ensure physical server integrity and encryption.

The "Powered by AI, Perfected by People" Approach

In the race to improve efficiency, many teams turn to automated platforms. Some enterprise tools promise to combine "strategy and speed" through automation alone.

While these platforms are powerful for internal drafts, they fundamentally shift the workload back to you. They are tools, not partners. In a self-serve model, the risk falls on you. A tool can help you build a slide faster, but it cannot take responsibility for MLR compliance.

We do not reject AI; we govern it.

We use generative AI for life sciences to handle low-level formatting tasks and structure data, leveraging the speed that technology provides. But we know that in high-stakes healthcare communication, the "Last Mile" requires human judgment.

This defines our strategic approach:

"Leading Enterprise Design — Powered by AI, Perfected by People, Driven by Purpose."

- Powered by AI: Our 14-person AI team ensures we use the latest tech for efficiency.

- Perfected by People: Every slide is finalized by one of our more than 200 design experts, who understand FDA/MLR guidelines.

- Driven by Purpose: As a Certified B Corp, we are committed to ethical standards: your data is handled responsibly, and sustainability is built into our business model.

This ensures you get the speed of a platform with the safety of a dedicated agency.

See What "Perfected by People" Looks Like

Don't gamble your next product launch on an algorithm.

While AI presentation makers for pharma are improving, they cannot yet navigate the "Last Mile" of compliance. By partnering with dedicated design teams, you get the best of both worlds: the efficiency of modern workflows and the safety of human-in-the-loop design.

Visual Evidence: From Data Dump to Scientific Insight

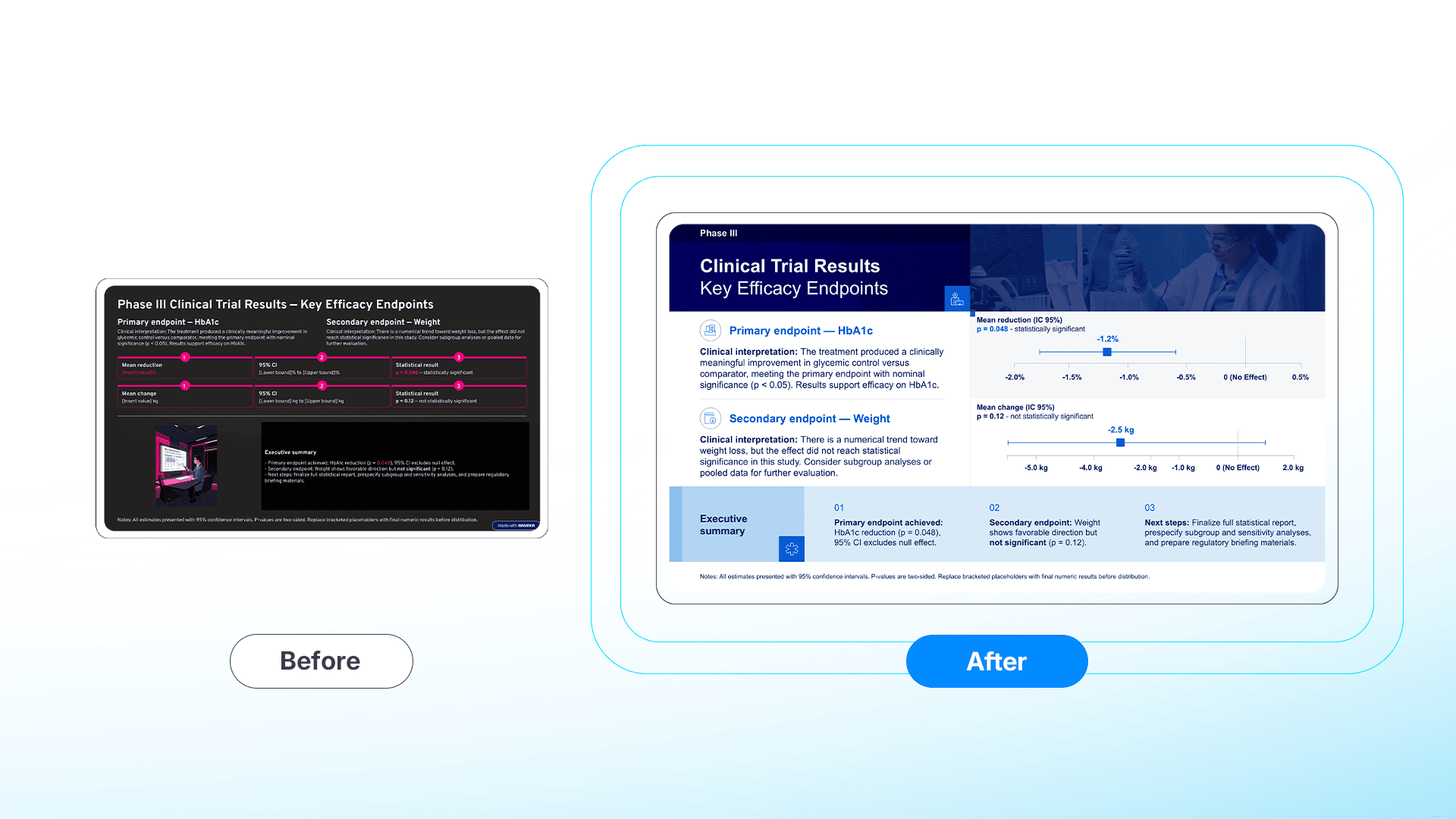

Case 1: Scientific Precision (The Forest Plot)

We took a misleading AI-generated draft, where the algorithm failed to distinguish between success and failure, and transformed it into a compliant visualization.

- The AI/Raw State: A layout characterized by "False Equivalency." By assigning equal visual weight to the primary endpoint (p=0.048) and the secondary endpoint (p=0.12), the AI creates a misleading narrative that obscures the true clinical outcome.

- The Human Redesign: A compliant Forest Plot. Instead of just listing numbers, the designer visualized the statistical significance. The Confidence Intervals clearly separate the efficacy signal (Primary Endpoint) from the noise (Secondary Endpoint), while a dedicated Safety section maintains FDA "Fair Balance."


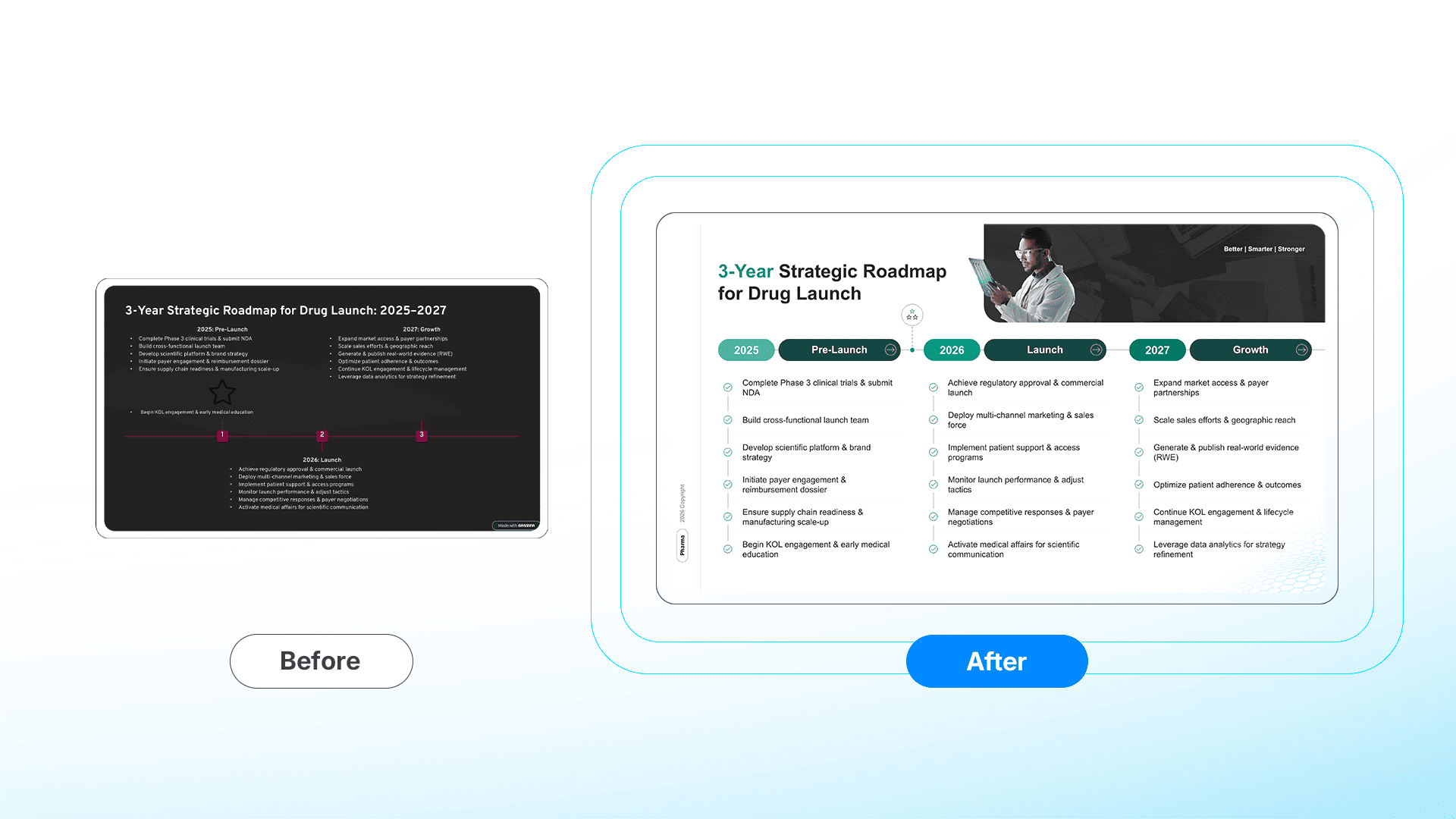

Case 2: Strategic Precision (The Launch Roadmap)

We took a generic timeline, where AI grid-lock had forced critical icons out of place, and built a cohesive narrative.

- The AI/Raw State: A disjointed list where the tool's code prevented the "Go/No-Go" star from sitting precisely on the timeline axis.

- The Human Redesign: A strategic Launch Roadmap. The designer broke the grid to place the decision milestone exactly where it belongs temporally, creating a clear visual path from Phase III to Commercialization.


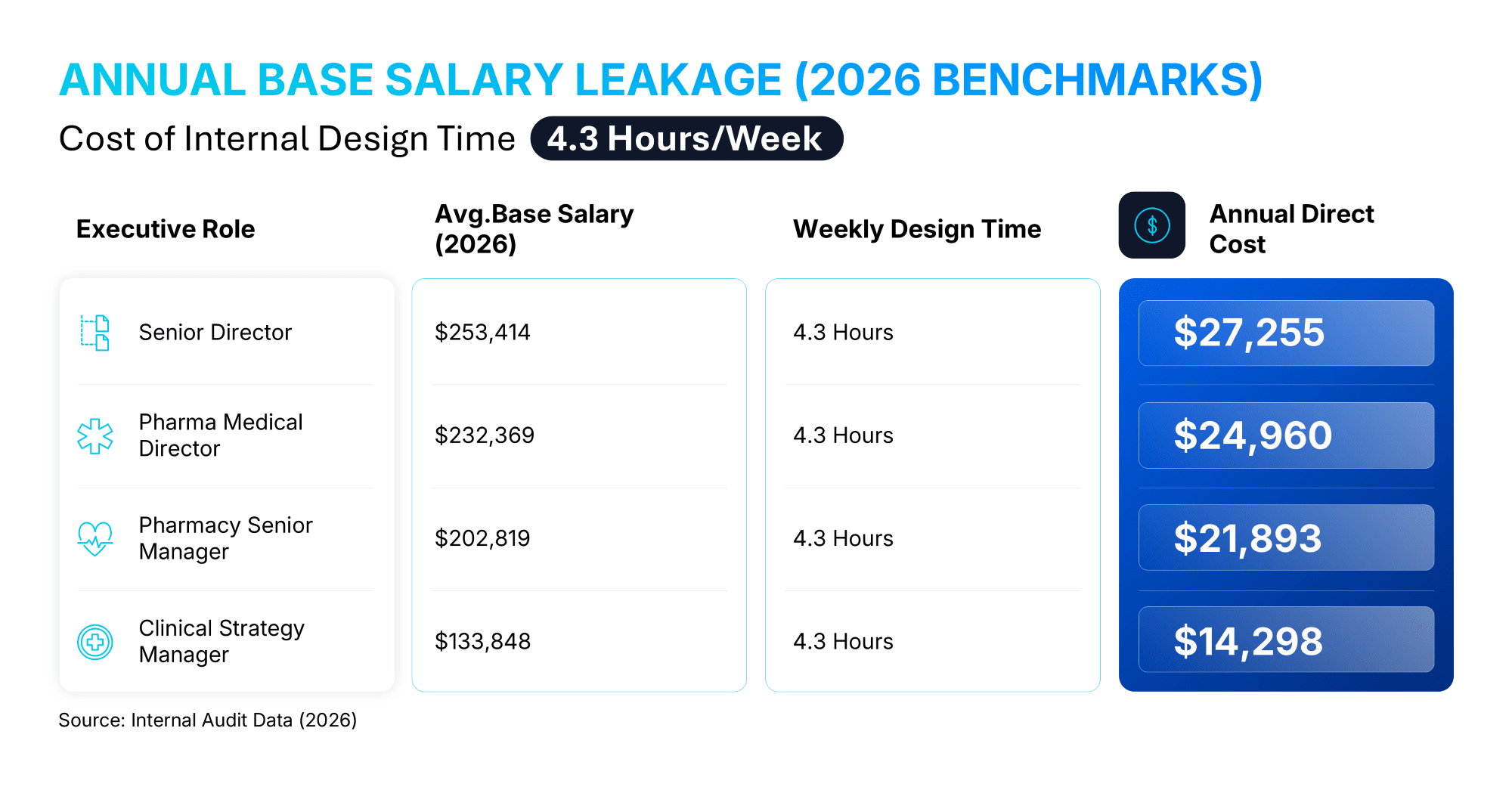

Quantify Your Organization's Salary Leakage

Is your team trying to fix broken AI exports manually? That is the most expensive way to design. The unmeasured cost of amateur design, or correcting "hallucinated" AI drafts, lies in the misallocation of human capital.

Based on our research involving over 1,000 pharmaceutical professionals, highly compensated Medical Directors and Senior MSLs dedicate an average of 4.3 hours per week to slide formatting and design-related tasks. This is not merely an annoyance; it is a financial drain.

In fact, each presentation carries a significant hidden cost: on average, individuals spend approximately 7 hours per presentation on design tasks alone.
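The arithmetic behind "salary leakage" is a simple proportion: if design work takes a fixed share of contracted hours, it consumes the same share of base salary. A minimal sketch, assuming a 40-hour contracted week (an assumption, not a figure from our research) and a salary you supply yourself; `annual_design_cost` is a hypothetical helper:

```python
def annual_design_cost(base_salary: float,
                       design_hours_per_week: float = 4.3,
                       contracted_hours_per_week: float = 40.0) -> float:
    """Annual base salary spent on slide design, assuming time is paid evenly."""
    if contracted_hours_per_week <= 0:
        raise ValueError("contracted hours must be positive")
    # Share of working time spent on design equals share of salary spent on it.
    share_of_time = design_hours_per_week / contracted_hours_per_week
    return base_salary * share_of_time
```

With the 4.3-hour default and a 40-hour week, this works out to 10.75% of base salary, whatever the salary.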

Annual Base Salary Leakage (2026 Benchmarks)

When you apply these hours to 2026 compensation data, the cost of "cleaning up" slides becomes a quantifiable loss on the balance sheet:

Stop allocating high-value scientific capital to low-value formatting tasks. Use the 24Slides ROI Calculator to see exactly how much budget your team is losing to manual design and how much strategic bandwidth you can reclaim.

Explore the Pharma Efficiency Series:

Discover how specialized design empowers the rest of the commercial lifecycle, from brand management to field force execution:

- How 24Slides Empowers Pharma Brand Managers to Reclaim Strategic Velocity

- How Pharma Teams Eliminate the ‘Last-Minute’ Presentation Crisis

- Maintaining Pharma Brand Consistency in Global Markets

- Clinical Trial Data Visualization: Reducing Cognitive Load in Pharma Presentations

- Scaling for Launch: Design Agility in the 24-Hour Window

- How Professional Presentation Design Empowers Pharmaceutical Sales Reps to Engage HCPs More Effectively